Hi, I’m Zach Seward, the Editorial Director of AI Initiatives at The New York Times. Before this role, I co-founded Quartz, a business news startup known for its experimental digital products. I’m here to discuss the evolving landscape of news, specifically how artificial intelligence is reshaping journalism in ways both promising and perilous. While the traditional image of news might still evoke the rumble of printing presses, AI is rapidly becoming a crucial tool in how news is gathered, produced, and delivered in the digital age.

I’m relatively new to The Times, so today I won’t delve into our specific projects. Instead, I want to offer a survey of the current state of AI in journalism, highlighting both its missteps and its inspiring successes. My aim is to extract lessons from these examples, shaping how we think about AI’s role in newsrooms like The Times and across the industry as a whole. These reflections represent my personal viewpoint but are indicative of my approach to integrating AI into journalistic practices, moving beyond the limitations of traditional news print and embracing the digital future.

AI news that's fit to print

We’ll begin by examining the failures – the “bad and the ugly” – because I believe these mistakes offer crucial learning opportunities. However, the majority of our time will be dedicated to exploring truly remarkable and inspiring applications of artificial intelligence in journalism. We’ll look at both established machine learning models, adept at identifying patterns in vast datasets, and the exciting potential of transformer models, or generative AI, to enhance the work of journalists and better serve our readers in this digital era, a stark contrast to the static nature of news print.

When AI Journalism Goes Wrong: Lessons in Misuse

The CNET Case: Automation Without Oversight

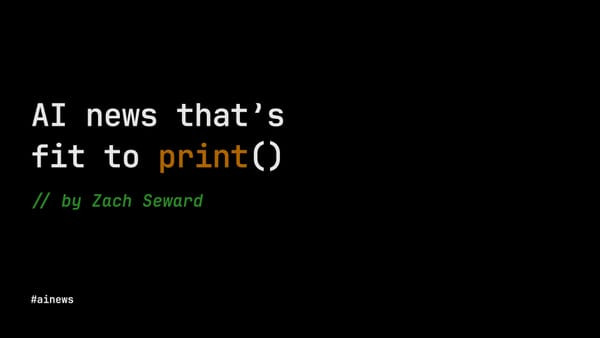

Let’s start with some cautionary tales. Last year, CNET, a tech news website under Red Ventures, was caught using “automation technology” to publish financial advice articles on topics like CD investments and bank account closures. These articles, attributed to “CNET Money Staff,” were generated by AI.

CNET AI news article

The AI-generated content was plagued with errors – classic “hallucinations” from large language models – requiring numerous corrections. Plagiarism was another issue, a common pitfall when generative AI is used to create entire articles from scratch. Months later, humans revised and updated these articles, their names and photos proudly displayed under the heading “Our experts.”

Incidents like this one often involve advisory content. Some publishers view this type of content as secondary to “real” journalism, primarily intended to drive affiliate revenue through product recommendations. The focus shifts from the reader’s genuine interest to profit, making it seem acceptable if the AI-generated content is subpar. This pattern unfortunately repeats itself across the digital news landscape.

Gizmodo and The Inventory: Efficiency Over Accuracy

G/O Media, where I briefly worked after selling Quartz, also experimented with generative AI, believing it could replace journalists for greater efficiency. It’s important to note that these events occurred after my departure.

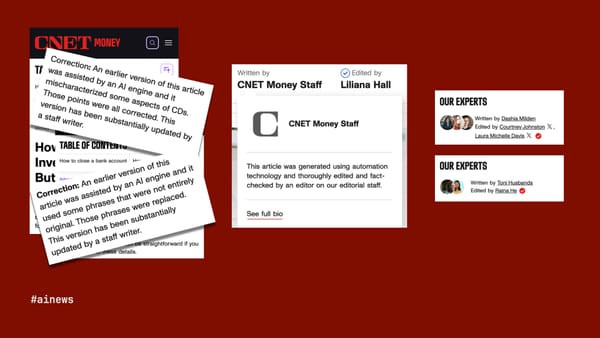

Gizmodo Star Wars AI error

Gizmodo, their flagship site, tasked an LLM, under the byline “Gizmodo Bot,” with creating a chronological list of the Star Wars franchise. The result? A glaring factual error in the timeline, quickly spotted and ridiculed by Star Wars fans online. The bot failed at its single, straightforward task.

While G/O largely retreated from AI experiments after this public embarrassment, their product recommendation site, The Inventory, continues to publish AI-generated articles. These articles promote deals, sometimes absurd ones like heavily discounted textbooks or questionable health products. The “Inventory Bot” even refers to itself as an entrepreneur and claims products are “tested and trusted,” while a small disclaimer at the end vaguely states the content “may produce inaccurate information.” The pursuit of affiliate revenue seems to overshadow journalistic integrity, a far cry from the trusted reputation of traditional news print.

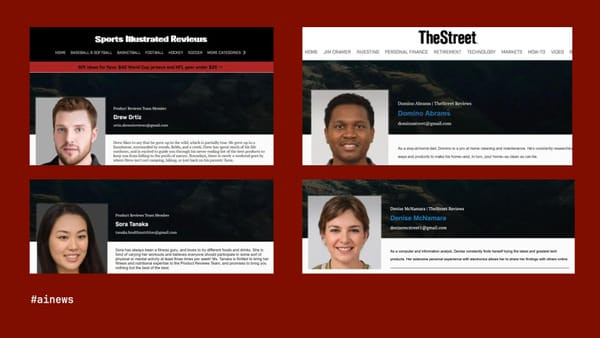

Sports Illustrated and The Street: Fabricated Authors and False Reviews

Arena Group, the licensee of Sports Illustrated and owner of The Street, engaged in similar practices with AI-generated product reviews. However, they added another layer of deception: fake authors. “Drew,” “Sora,” “Domino,” and “Denise,” complete with stock photo profiles, were presented as real reviewers when they were entirely fabricated. This was a lie built upon layers of falsehoods.

Sports Illustrated fake authors

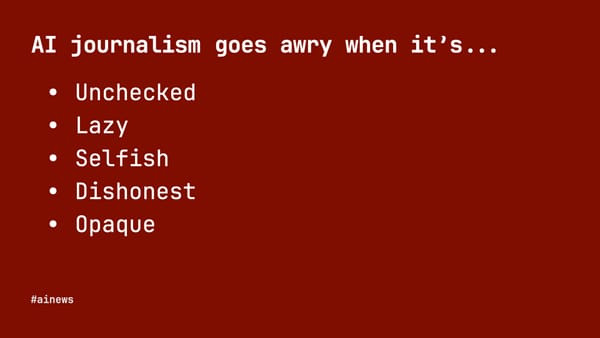

Lessons from AI Journalism Failures

I’m not aiming to simply criticize these companies, although their actions warrant it. These examples offer valuable lessons. Common threads run through these failures: unchecked AI-generated content, a lazy approach to implementation, purely self-serving motivations focused on profit, and dishonest or opaque presentation. This misuse contrasts sharply with the principles that have historically underpinned quality news print.

AI journalism awry

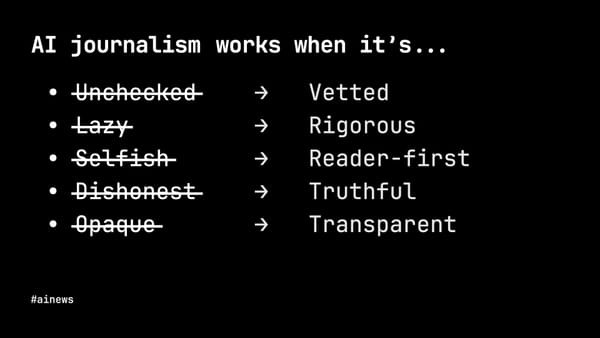

AI journalism works when

For AI journalism to succeed, it requires rigorous vetting. The driving force must be serving readers’ best interests. And fundamentally, the core principles of journalism – truth and transparency – must be upheld. Moving forward, journalism must adapt to the digital age without sacrificing the values that made news print a trusted source of information for so long.

Fortunately, numerous examples demonstrate successful AI applications across various publishers, utilizing both traditional machine learning and generative AI ethically and effectively. Let’s explore these inspiring use cases.

Recognizing Patterns with Machine Learning: Enhancing Investigative Journalism

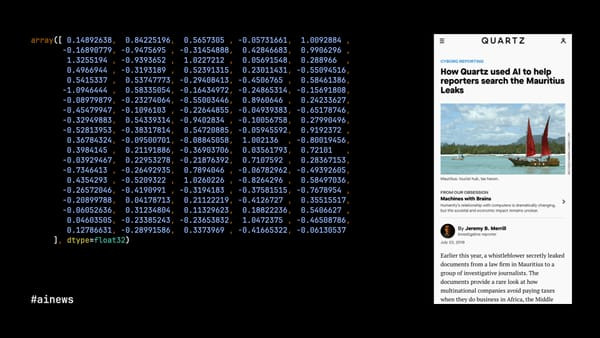

Quartz and the Mauritius Leaks: Control-F on Steroids

I’ll begin with a project from my time at Quartz in 2019. We collaborated with the International Consortium of Investigative Journalists (ICIJ), who had received a massive leak of documents from law firms specializing in offshore wealth concealment – the Mauritius Leaks. The sheer volume of data was overwhelming for manual review.

Mauritius Leaks Quartz AI

My colleagues Jeremy Merrill and John Keefe developed a tool, essentially “control-F on steroids.” ICIJ experts identified key passages in a sample of documents related to tax avoidance. Jeremy then converted the text of all documents into vectors using a model called doc2vec. This transformed the Mauritius Leaks into a multidimensional space, allowing us to identify documents similar to the initial samples, even if not identical. The model could detect patterns invisible to human reviewers, making the analysis of 200,000 technical documents possible and efficient. This was a significant leap beyond traditional methods of analyzing news print archives.
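To make the “find documents like these samples” idea concrete, here is a minimal sketch, not the code Quartz used. It swaps doc2vec for plain word-count vectors so that it runs with the standard library alone, and it ranks documents by cosine similarity to a handful of expert-chosen seed passages. The function names and the sample texts are invented for illustration.

```python
import math
import re
from collections import Counter


def vectorize(text):
    """Turn a document into a sparse word-count vector (a stand-in for doc2vec)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse vectors stored as Counters."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_by_similarity(seed_docs, corpus):
    """Rank corpus documents by their best similarity to any seed passage."""
    seed_vecs = [vectorize(s) for s in seed_docs]
    scored = []
    for doc_id, text in corpus.items():
        vec = vectorize(text)
        score = max(cosine(vec, s) for s in seed_vecs)
        scored.append((doc_id, score))
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical sample data, invented for illustration.
seeds = ["structure reduces withholding tax through a treaty with Mauritius"]
corpus = {
    "memo_a": "the treaty structure lowers withholding tax on dividends",
    "memo_b": "office lease renewal and parking arrangements",
}
ranking = rank_by_similarity(seeds, corpus)
```

Word counts only match documents that share vocabulary with the seeds. A learned embedding such as doc2vec can also surface documents that are similar in meaning but worded differently, which is the property the paragraph above describes.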

Grist and The Texas Observer: Uncovering Abandoned Oil Wells

The Texas Observer and Grist, an environmental news non-profit, used similar techniques in their investigation of abandoned oil wells in Texas and New Mexico. The number of wells, officially and unofficially abandoned, was in the tens of thousands, making physical inspection impossible.

Texas Observer Grist oil wells AI

Clayton Aldern at Grist employed statistical modeling, a form of machine learning, to compare conditions around officially listed abandoned wells with a larger dataset of potential sites. The team discovered at least 12,000 additional abandoned wells in Texas that were not officially documented, revealing a hidden environmental issue through data analysis, a type of investigation unimaginable when journalism was limited to print.

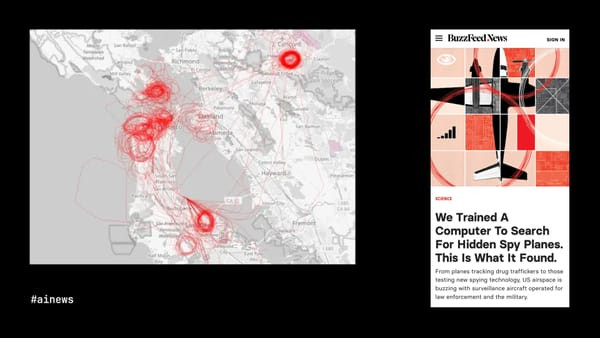

BuzzFeed News: Spotting Spy Planes in Flight Data

BuzzFeed News, before it shut down, showcased another innovative application. Peter Aldhous used publicly available flight data and a simple insight: circling flight patterns often indicate surveillance.

BuzzFeed News spy planes AI

Aldhous generated images of flight patterns and used a machine learning model trained on pattern recognition to identify these circular patterns. The model’s ability to detect “kinda-circles” and “almost-circles” was crucial. The investigation uncovered widespread surveillance over major US cities by both government and private aircraft, demonstrating AI’s ability to analyze complex datasets and reveal hidden activities, a far cry from the limitations of traditional news print reporting.
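The underlying insight, that circling is visible in the shape of a track, can be illustrated without any machine learning. The sketch below is a hand-written heuristic and not the trained model described above: it adds up how much a flight path turns and flags tracks that complete at least one full loop. The function names, the `min_loops` parameter, and the sample tracks are all assumptions made for this example.

```python
import math


def total_turning_degrees(track):
    """Sum the signed heading changes along a track of (x, y) points."""
    headings = [
        math.atan2(y2 - y1, x2 - x1)
        for (x1, y1), (x2, y2) in zip(track, track[1:])
    ]
    total = 0.0
    for h1, h2 in zip(headings, headings[1:]):
        delta = h2 - h1
        # Wrap into [-pi, pi) so a small turn is never read as a near-full one.
        delta = (delta + math.pi) % (2 * math.pi) - math.pi
        total += delta
    return math.degrees(total)


def looks_like_circling(track, min_loops=1.0):
    """Flag a track whose net turning adds up to at least min_loops full circles."""
    return abs(total_turning_degrees(track)) >= 360.0 * min_loops


# Hypothetical tracks: two laps around a circle, and a straight line.
circle = [
    (math.cos(math.radians(10 * k)), math.sin(math.radians(10 * k)))
    for k in range(73)
]
straight = [(float(k), 2.0 * k) for k in range(20)]
```

A fixed rule like this is brittle on real flight data, which is noisy and full of “kinda-circles.” That is the case for training a model on examples rather than writing the rule by hand.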

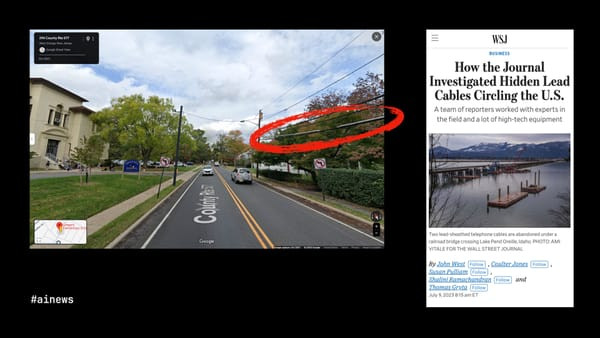

The Wall Street Journal: Exposing Lead Cabling with Image Recognition

Image recognition is a powerful tool in investigative journalism. The Wall Street Journal used it to investigate the pervasive presence of lead cabling in the US, long after the dangers of lead were known.

Wall Street Journal lead cables AI

John Bebe-West led an investigation using Google Street View images near schools in New Jersey. While these images appear ordinary to the human eye, a machine learning model trained to recognize lead cabling identified a public health crisis. The Journal found extensive lead cabling in public areas nationwide and conducted on-site tests, confirming dangerously high lead exposure levels. AI allowed for a virtual presence on every street corner, an impossible feat for human journalists alone, and a method far beyond the scope of traditional news print investigations.

The New York Times: Satellite Imagery and Gaza Bombing

Satellite imagery, now readily available in near real-time, provides another powerful tool. My colleagues at The New York Times have used it for incredible investigations. For example, during the Israel-Gaza conflict, the Visual Investigations team sought to document the bombing campaign in southern Gaza, the supposed safe zone. Official accounts of bombing intensity were lacking, but bombs leave craters.

The Times developed an AI tool to analyze satellite imagery of southern Gaza for bomb craters. The AI identified over 1,600 potential craters, each of which was manually reviewed to eliminate false positives. The remaining craters were measured to find those exceeding 40 feet in diameter, which is indicative of 2,000-pound bombs. Ultimately, 208 such craters were confirmed, revealing the pervasive threat to civilians.

Ishaan Jhaveri led this work. It’s a story that could not have been told without AI, combined with journalistic expertise. This type of real-time, visual investigation represents a new frontier in journalism, moving far beyond the static images of news print.
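The workflow described for the crater analysis (model proposes candidates, a human confirms each one, then a size threshold is applied) can be sketched as a simple filter. This is an illustration of the pattern only, not The Times’s tooling. The `Candidate` fields, the metric measurements, and the sample values are hypothetical; only the 40-foot threshold comes from the account above.

```python
from dataclasses import dataclass

LARGE_CRATER_FEET = 40.0   # threshold cited in the text
FEET_PER_METER = 3.28084   # unit conversion


@dataclass
class Candidate:
    crater_id: str
    diameter_m: float       # hypothetical field: diameter measured from imagery
    human_confirmed: bool   # set by a reviewer, never by the model


def large_confirmed_craters(candidates):
    """Keep candidates that a human confirmed and that exceed the size threshold."""
    return [
        c for c in candidates
        if c.human_confirmed
        and c.diameter_m * FEET_PER_METER > LARGE_CRATER_FEET
    ]


# Made-up candidates standing in for model output after manual review.
candidates = [
    Candidate("c1", 13.0, True),    # about 42.7 feet, confirmed
    Candidate("c2", 15.0, False),   # large, but rejected as a false positive
    Candidate("c3", 8.0, True),     # confirmed, but too small
]
large = large_confirmed_craters(candidates)
```

The design choice worth noting is that the model’s output never reaches the final count directly. Every item passes through the `human_confirmed` gate first.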

Machine Learning: Pattern Recognition for Modern Journalism

Machine learning excels in journalism by recognizing patterns invisible to the human eye alone: patterns in text, numerical data, visual representations of data, street-level photos, and satellite imagery.

Machine learning pattern recognition

While these capabilities have existed for some time, the latest machine learning technology – LLMs and generative AI – offers equally inspiring possibilities. If traditional machine learning excels at pattern recognition in data, generative AI’s strength lies in creating patterns, opening new avenues for journalistic innovation beyond the limitations of news print.

Creating Sense with Generative AI: Summarization and Structure

The Marshall Project: Summarizing Prison Book Banning Policies

The Marshall Project, a non-profit focusing on the US justice system, investigates book banning in state prisons. They maintain a database of banned books and acquired official book banning policies from 30 state prison systems. These policies are often lengthy and complex.

Marshall Project prison book bans AI

To make these policies accessible, The Marshall Project journalists identified key sections in each document. Andrew Rodriguez Calderón then used OpenAI’s GPT-4 with specific prompts to generate readable summaries of each policy. Journalists reviewed these summaries before publication. While summarizing one policy manually might be feasible, using an LLM allowed them to efficiently summarize policies across 30 states, a task that would be incredibly time-consuming using traditional methods associated with news print production.

Watchdog Reporting in the Philippines: Decoding Bureaucracy

Jaemark Tordecilla, a Filipino journalist and Nieman Fellow, achieved similar success using LLMs to decode bureaucratic documents. He built a custom GPT to summarize audit reports from Philippine government agencies. These reports often contain evidence of corruption but are long and difficult to understand. Filipino journalists now use Jaemark’s tool to identify potential corruption and generate leads for investigations, turning complex government documents into actionable news stories.

Philippine journalism AI audit reports

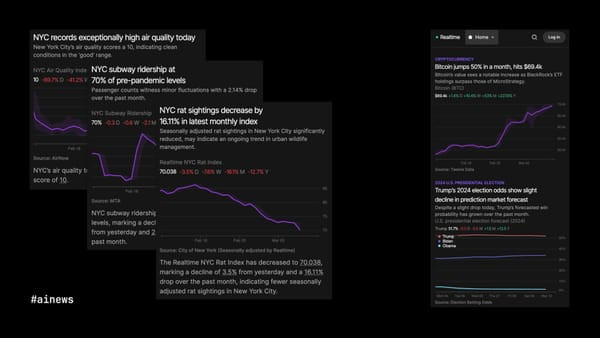

Realtime: Automated Data Journalism with Human Context

Many associate generative AI in journalism with fully automated news sites, like the problematic examples we discussed earlier. However, Realtime, created by Matthew Conlen and Harsha Panduranga, is a fully automated site that provides genuine value while acknowledging its limitations and using AI judiciously.

Realtime automated news site AI

Realtime builds charts from regularly updated data feeds covering financial markets, sports, government records, and more. The data and charts are fully automated, highlighting interesting data points like outliers or significant trends. LLMs provide context through headlines and brief text around the charts, helping readers understand the data – for example, reporting on air quality, subway ridership, or rat control progress in New York City. These are focused applications of LLMs, with full disclosure of AI involvement. It’s not in-depth investigative journalism, but it’s a helpful, automated way to monitor city trends, demonstrating AI’s potential to augment, rather than replace, traditional reporting.
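How an automated site might decide which data points are worth highlighting can be sketched with a basic z-score test. This is an assumption about one plausible approach, not a description of Realtime’s actual method. The function name, the threshold, and the sample counts are invented for the example.

```python
import statistics


def flag_outliers(series, threshold=2.0):
    """Return (index, value, z_score) for points far from the series mean."""
    mean = statistics.fmean(series)
    spread = statistics.pstdev(series)
    if spread == 0:
        return []  # a flat series has nothing to highlight
    flagged = []
    for i, value in enumerate(series):
        z = (value - mean) / spread
        if abs(z) >= threshold:
            flagged.append((i, value, round(z, 2)))
    return flagged


# Hypothetical daily counts, invented for illustration.
daily_counts = [100, 102, 98, 101, 99, 100, 180]
outliers = flag_outliers(daily_counts)
```

In a setup like this, only the flagged points and their surrounding numbers would be passed to an LLM for a headline. That keeps the model’s job narrow and its claims checkable against the data.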

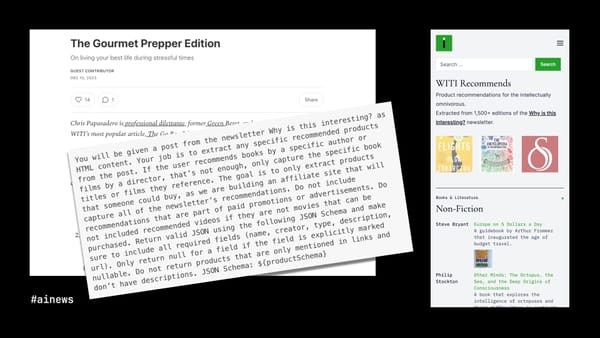

WITI Recommends: Structuring Unstructured Recommendations

“Why is this interesting?” (WITI) is a popular daily newsletter with guest writers. Each edition often mentions recommended products. Noah Brier, a WITI co-founder, wanted to collect these recommendations.

WITI Recommends product recommendations AI

The challenge was that these “recommendations” were embedded within unstructured prose. Some links were to products writers disliked or were not for sale. Noah used a tailored GPT-4 prompt to extract and classify product recommendations from 1,500 newsletter editions, creating a display-ready database. This highlights that LLMs are not just for writing; their strength lies in structuring unstructured text, a valuable tool for modern digital journalism, even if seemingly removed from the traditional workflows of news print.
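Turning prose into a database has two halves: asking the model for structured output, and refusing to trust that output until it has been validated. The sketch below shows both under stated assumptions. The prompt wording, the JSON keys (`name`, `recommended`, `for_sale`), and the sample reply are hypothetical rather than taken from the WITI project, and no model is actually called.

```python
import json

# Hypothetical prompt; the real project's wording is not public in this text.
PROMPT_TEMPLATE = (
    "Extract every product mentioned in the newsletter text below. "
    "Reply with a JSON list of objects with the keys 'name', 'recommended' "
    "(true or false) and 'for_sale' (true or false).\n\n{text}"
)


def build_prompt(newsletter_text):
    """Fill the extraction prompt with one newsletter edition."""
    return PROMPT_TEMPLATE.format(text=newsletter_text)


def parse_recommendations(model_reply):
    """Keep only well-formed items that are both endorsed and purchasable."""
    try:
        items = json.loads(model_reply)
    except json.JSONDecodeError:
        return []  # malformed output is dropped, not guessed at
    if not isinstance(items, list):
        return []
    kept = []
    for item in items:
        if not isinstance(item, dict):
            continue
        name = item.get("name")
        endorsed = item.get("recommended") is True
        purchasable = item.get("for_sale") is True
        if isinstance(name, str) and endorsed and purchasable:
            kept.append(name)
    return kept


# A made-up reply standing in for what a model might return.
sample_reply = json.dumps([
    {"name": "Notebook X", "recommended": True, "for_sale": True},
    {"name": "Gadget Y", "recommended": False, "for_sale": True},
    {"name": "Museum exhibit Z", "recommended": True, "for_sale": False},
])
recommendations = parse_recommendations(sample_reply)
```

The two boolean fields mirror the two problems named above: links to products the writer disliked, and links to things that are not for sale.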

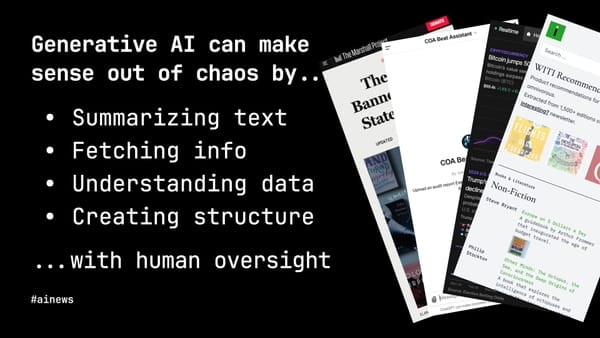

Generative AI: Making Sense of Chaos

These examples illustrate generative AI’s promise for journalism and other fields. LLMs are valuable tools for summarizing text, extracting information, understanding data, and creating structure from the messy reality of everyday life.

Generative AI sense of chaos

However, human oversight is crucial: guiding summaries, verifying results, training bots, and designing the overall system. In all successful applications, humans remain central, guiding and validating AI’s contributions. This approach ensures that AI enhances, rather than diminishes, the quality and integrity of journalism, and avoids the inaccuracies that come with purely automated news generation.

These examples, I hope, are as inspiring to you as they are to me. Here is my contact information. If you are working on similar AI-journalism projects, I’d love to hear from you.